Blogs

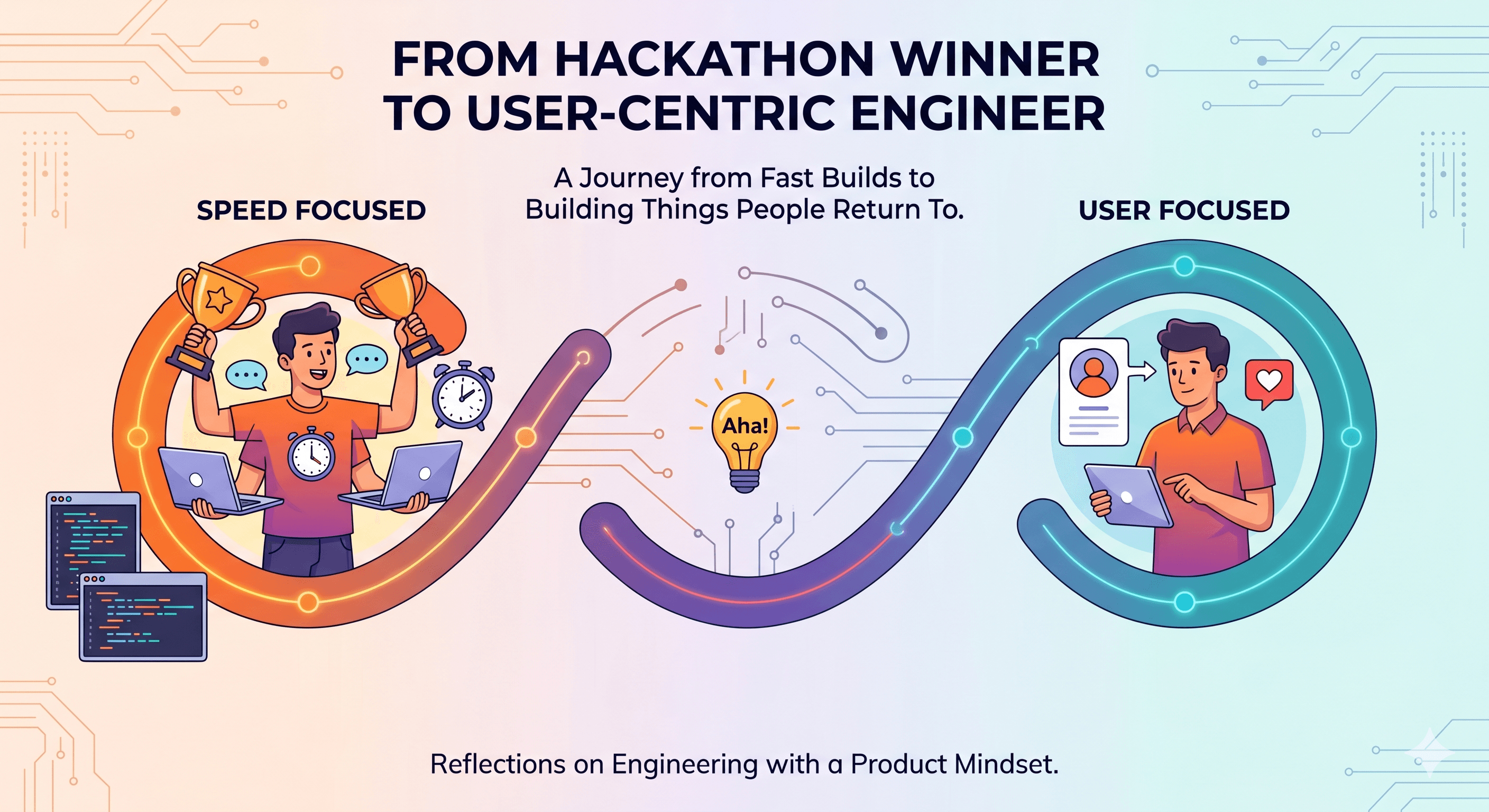

How Building a Startup Changed How I Work as an AI Engineer

April 27, 2026

From building projects to building products: My journey

artificial intelligence, ai, startup, machine learning, aiml

Building SmallGPT - Recreation of GPT2

March 11, 2026

A GPT-style transformer language model built from scratch in PyTorch and trained on OpenWebText with GPT-2 tokenization. The project demonstrates the full LLM pipeline: tokenization, transformer architecture, training optimization, and autoregressive text generation.

artificial intelligence, large language models, transformers, natural language processing, pytorch

I AM a failure

February 13, 2026

I have failed many times in life, but that doesn't stop me.

failure, learning from failure, student entrepreneur journey, startup failure, hackathon lessons, rejected interviews, build in public, ship fast, product thinking, growth mindset, personal growth, developer life, college to career, resilience, iteration, embracing failure, real talk, maker journey, indie hacker, early stage builder, imperfect action, clarity over knowledge, lessons from rejection, shipping over perfection, finding your path